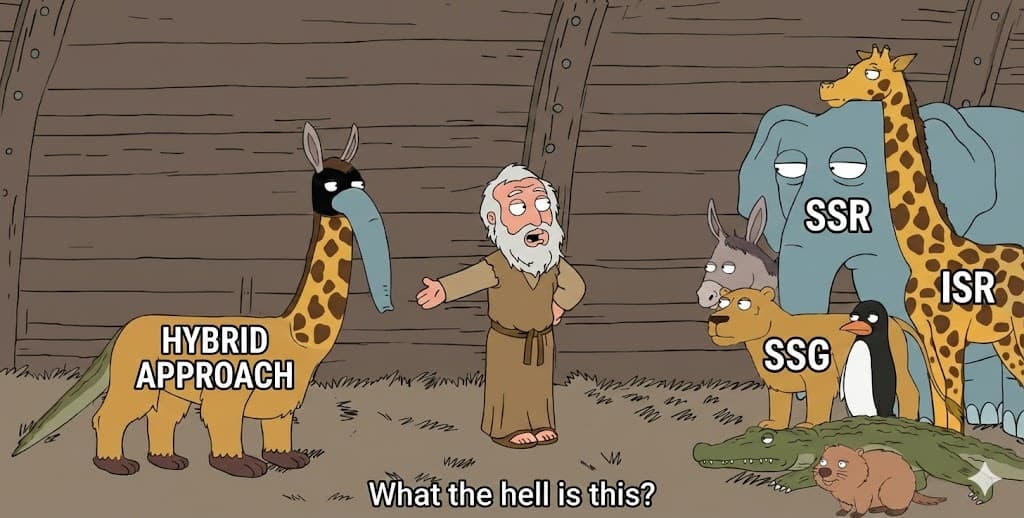

SSR vs SSG vs ISR - Part 1: The Request (SSR)

How It Works and When to Use It

When I started this experiment, I thought SSR was simple:

“SSR renders on every request.”

But when I tried to observe it in practice, something clicked:

All the diagrams in blogs and docs tell you what,

but none tell you why it feels slow or what the browser actually waits for.

So I set up a simple route in my test project:

/pages-ssr // For Pages Router SSR

/app-ssr // For App Router SSR

Both do the same thing:

- simulate an 800 ms delay
- fetch “data”
- return a timestamp
- render a simple UI

Everything else remains constant.

Same UI.

Same data.

Same delay.

Only the rendering strategy changes.
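As a rough sketch, the shared work behind both routes could look like the helper below. The names `sleep`, `loadData`, and `PageData` are hypothetical and not taken from the test project; the only details from the experiment are the 800 ms delay and the timestamp.

```typescript
// Hypothetical shared helper: both routes would call this,
// so only the rendering strategy differs between them.
type PageData = { message: string; renderedAt: string };

// Resolve after `ms` milliseconds; stands in for a slow database or API call.
function sleep(ms: number): Promise<void> {
  return new Promise((resolve) => setTimeout(resolve, ms));
}

async function loadData(delayMs: number = 800): Promise<PageData> {
  await sleep(delayMs); // the simulated "server work"
  return {
    message: "Hello from the server",
    renderedAt: new Date().toISOString(), // a new value on every call
  };
}
```

Because `renderedAt` is computed inside the function, any strategy that calls it per request shows a new timestamp on every refresh.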

SSR — Not Theory, But What Happens

Here’s what I observed in DevTools:

- Open Chrome DevTools → Network
- Filter by Doc
- Reload /pages-ssr
- Click the document request
- Go to the Timing tab
- Look at Waiting for server response

This is the important part:

You’ll see:

- Waiting ~800 ms
- Content download ~negligible
- Timestamp changes on every refresh

The browser waits until the server is done doing everything — data fetch, render, HTML generation — before it ever gets a byte of HTML.

That’s what SSR really means.

The response is blocked until the server has finished its work.

No pre-rendered file.

No caching.

Just work on every request.

In the old blog posts you’d hear:

“SSR increases TTFB.”

But Time to First Byte (TTFB) didn’t become meaningful to me until I saw it in the timing breakdown:

📌 The server doesn’t send HTML until rendering completes.

📌 The browser genuinely waits.

📌 Most of that wait is server compute.

That’s the real mechanism.
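The same breakdown can be read programmatically from the browser's Navigation Timing entry. The sketch below is one way to do it, assuming that `responseStart - requestStart` approximates the Waiting row in DevTools and `responseEnd - responseStart` approximates Content download. The function name `timingBreakdown` and the `NavTiming` type are hypothetical.

```typescript
// Only the fields this sketch needs from a PerformanceNavigationTiming entry.
type NavTiming = {
  requestStart: number;
  responseStart: number;
  responseEnd: number;
};

// Split a navigation into "waiting for the first byte" and "downloading the body".
function timingBreakdown(entry: NavTiming): { waitingMs: number; downloadMs: number } {
  return {
    waitingMs: entry.responseStart - entry.requestStart,
    downloadMs: entry.responseEnd - entry.responseStart,
  };
}

// In the browser console on the loaded page:
//   const [nav] = performance.getEntriesByType("navigation");
//   console.log(timingBreakdown(nav as PerformanceNavigationTiming));
```

For an entry like `{ requestStart: 5, responseStart: 805, responseEnd: 805.5 }`, this returns `{ waitingMs: 800, downloadMs: 0.5 }`, which matches the shape of the numbers seen in the Timing tab.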

Why SSR Isn’t Just a Buzzword

To understand why this matters, consider what SSR promises:

- Fresh data on every request

And what it costs:

- You pay the rendering cost each time
- You pay it before the browser gets HTML
- That cost shows up as TTFB

In my experiment:

Waiting (TTFB): ~800 ms

Content Download: ~0.5 ms

Look at the green bar in the Timing tab. On localhost, that bar is almost entirely server compute.

Network latency is negligible and no client-side JS has run yet. Just server work before the response.

That’s SSR.

If you refresh 10 times, the timestamp changes on every load while the wait stays at roughly 800 ms, because every request triggers a new render.

Pages Router vs App Router — Same Outcome, Different API

In the Pages Router, we force SSR using:

export async function getServerSideProps() { … }
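A fuller version of that function might look like the sketch below. It covers only the data half of a hypothetical `pages/pages-ssr.tsx`; the page component that receives the props is omitted, and `Props` and `DELAY_MS` are illustrative names, not the project's actual code.

```typescript
// pages/pages-ssr.tsx (data half only; the default-exported page component is omitted)
type Props = { renderedAt: string };

const DELAY_MS = 800; // simulated server work

// Next.js calls this on every request and passes `props` to the page component.
export async function getServerSideProps(): Promise<{ props: Props }> {
  await new Promise<void>((resolve) => setTimeout(resolve, DELAY_MS));
  return { props: { renderedAt: new Date().toISOString() } };
}
```

Nothing is sent to the browser until this function resolves and the page has been rendered with its props, which is why the delay appears as waiting time rather than download time.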

In the App Router, we achieve SSR using:

export const dynamic = "force-dynamic";
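In context, a hypothetical `app/app-ssr/page.tsx` could look like the sketch below. To stay dependency-free it returns a plain string instead of JSX, which is an assumption made for illustration (a string is a valid React node). A real page would normally return markup. `DELAY_MS` is an illustrative name.

```typescript
// app/app-ssr/page.tsx
// Ask Next.js to render this route at request time instead of prerendering it.
export const dynamic = "force-dynamic";

const DELAY_MS = 800; // simulated server work

// An async server component: it runs on the server for each request.
export default async function Page(): Promise<string> {
  await new Promise<void>((resolve) => setTimeout(resolve, DELAY_MS));
  return `Rendered at ${new Date().toISOString()}`;
}
```

The delay sits inside the component itself here, rather than in a separate data function as in the Pages Router.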

Different syntax.

Same real behavior.

From the browser’s perspective:

- HTML is generated per request
- The timing breakdown is the same
- TTFB reflects server work before response

The difference is just how Next.js expresses it.

What I Learned About SSR

Here’s the important insight:

SSR is not about React.

SSR is about when HTML is generated.

You can use it when:

- You must guarantee fresh data every time
- You cannot cache at any level
- Every user needs up-to-the-millisecond correctness

But you pay for it by:

- Blocking the request
- Increasing TTFB
- Executing server compute per request

That’s the real SSR execution model — and now we can measure it.

In the next part, we’ll flip the model completely.

We’ll move the rendering cost out of the request and into the build.

And that change — from per-request to pre-generated — will change everything.